If You’re Scaling Systems

This Is The Gap You Can’t See

Designing for Human Impact at Scale

Everything can look right—and still be off.

For years, we’ve built systems to answer one question:

“Is it secure?”

But at scale, that’s not enough.

The real question is:

What happens after people interact with it?

That’s the gap this model is designed to address.

The problem isn’t that we lack frameworks. It’s that none of them are designed to measure human impact at scale.

So we keep building systems that work, without fully understanding what they do once they reach the real world.

This model shows how to close that gap—without changing how we build.

Who this is for:

Product leaders scaling systems to millions of users

Security & risk teams thinking beyond technical vulnerabilities

Executives responsible for real-world outcomes, not just delivery

The Meta ruling in New Mexico didn’t just make headlines. It exposed something the tech industry can no longer ignore.

We’ve mastered building systems that work. We’ve matured how we secure them. We’ve created frameworks to govern them.

And still… we’re being caught off guard by what they do once they scale.

Not because we lack intelligence. But because we’ve been asking incomplete questions.

For years, the industry standard has been: “Will this system be secure?”

But at scale, the real question becomes: “What happens when millions of humans interact with this system?”

We Didn’t Get It Wrong. We Just Didn’t Go Far Enough.

Let’s be clear: the industry has built powerful frameworks. Each of them plays a critical role. But they weren’t built to measure what happens after people interact with these systems in the real world. When you look at them side by side, the pattern becomes clear.

We’ve built systems to answer one question: “Is it secure?”

We haven’t built systems to answer the question that actually matters: “What happens next?”

The Pattern We Can’t Ignore

Across every framework, the same gap shows up.

To see it more clearly, it helps to break these frameworks down by what they were designed to address and what they leave unaddressed.

| Area | What Doesn’t Change | What Expands |
| --- | --- | --- |
| Compliance Risk (NIST Privacy Framework) | Strong governance structures and regulatory controls | Accountability for downstream impact that falls outside defined regulatory requirements |
| Usability / UX (Interaction Design Foundation) | Well-established design practices focused on ease of use and accessibility | Evaluation of whether ease of use increases harm, dependency, misuse, or negative behavioral outcomes |
| Security Risk (OWASP) | SDLC remains consistent | Risk extends into human behavior |
| Ethical Principles (IEEE 2089) | Clearly defined ethical frameworks and guidance | Not operationalized into repeatable, day-to-day product development workflows |
| Trust & Transparency (OECD AI Principles) | Growing emphasis on transparency, fairness, and accountability | Not tied to measurable outcomes or enforced across the product lifecycle |
| Behavioral Harm (Partnership on AI) | Acknowledged in research and guidance, but inconsistently defined and not standardized across product teams | No consistent, testable, or repeatable methods embedded in the product lifecycle to anticipate, simulate, and mitigate behavioral harm at scale |
| Psychological Impact (WHO Digital Health Guidance) | Recognized as a concern in broader research and policy discussions | No integration into product development lifecycle, testing, or monitoring processes |
| Societal Downstream Effects (UNESCO AI Ethics) | Addressed at a policy and principle level | No clear ownership model or accountability within product teams |
| Product Misuse at Scale (MITRE ATLAS) | Provides structured, real-world patterns of how AI systems can be exploited, misused, or manipulated by adversaries | Focuses on adversarial and security-driven misuse, but does not extend to modeling everyday user behavior, unintended misuse, or proactive simulation within the product development lifecycle |
| Indirect / second-order harm (AI Now Institute) | Increasingly studied in research contexts | Not modeled or incorporated into product design and decision-making processes |
| Long-term cumulative impact (ISO/IEC JTC 1/SC 42 – AI Standards) | Addressed within continuous risk management and lifecycle guidance, with emphasis on monitoring and risk over time | No standardized, product-level mechanisms to measure, track, and respond to cumulative human impact across real-world usage over time |

Where Current Models Fall Short

Each framework solves a piece of the problem, but none connects the pieces.

These frameworks aren’t broken—they’re incomplete when it comes to understanding human impact at scale.

They were designed to solve specific classes of risk, not the system as a whole.

| Framework | What It Gets Right | Where It Falls Short |
| --- | --- | --- |
| SDLC / Secure SDLC (OWASP) | Provides structure, security rigor, and repeatable development practices | Focuses on system integrity and vulnerabilities, without addressing human behavior, misuse patterns, or downstream impact at scale |
| Responsible AI (IEEE P7000) | Introduces ethical consideration early in the design process | Defines principles but lacks consistent, testable integration into product development workflows |
| Trust by Design (OECD AI Principles) | Emphasizes transparency, accountability, and user trust | Focuses on trust signals rather than measuring or mitigating behavioral and societal consequences |
| Human-Centered Design (Interaction Design Foundation) | Optimizes usability, accessibility, and user experience | Centers user interaction, but does not account for long-term behavioral, psychological, or societal impact |
| DevSecOps (DevSecOps Foundation) | Integrates security continuously into development and deployment pipelines | Prioritizes security and compliance automation without addressing human impact, misuse, or second-order effects |
| Risk Management Frameworks (NIST AI Framework) | Provides structured risk identification, assessment, and continuous monitoring | Primarily oriented toward system and organizational risk, not cumulative human or societal impact over time |
| ITIL (IT Infrastructure Library) | Defines how IT services are delivered and supported at scale | Optimizes service reliability, not human behavioral or societal impact |
| NIST Cybersecurity Framework (CSF) | Establishes a comprehensive model for identifying, protecting, detecting, responding to, and recovering from system-level risks | Oriented toward technical and organizational risk, without modeling behavioral impact, user interaction patterns, or downstream societal effects |
| COBIT (ISACA) | Establishes strong governance, control, and accountability models to align IT systems with business objectives and regulatory expectations | Focuses on organizational governance and control, but does not account for how products influence user behavior, misuse patterns, or long-term societal impact |
| ISO/IEC 27001 (ISO) | Provides a globally recognized standard for establishing, managing, and continuously improving information security management systems | Focuses on protecting data and systems, but does not address how products influence user behavior, misuse patterns, or long-term human and societal impact |

So what does this mean in practice?

It means the gap isn’t in awareness.

It’s in execution.

We don’t need more principles.

We need a way to apply them consistently, repeatably, and at scale.

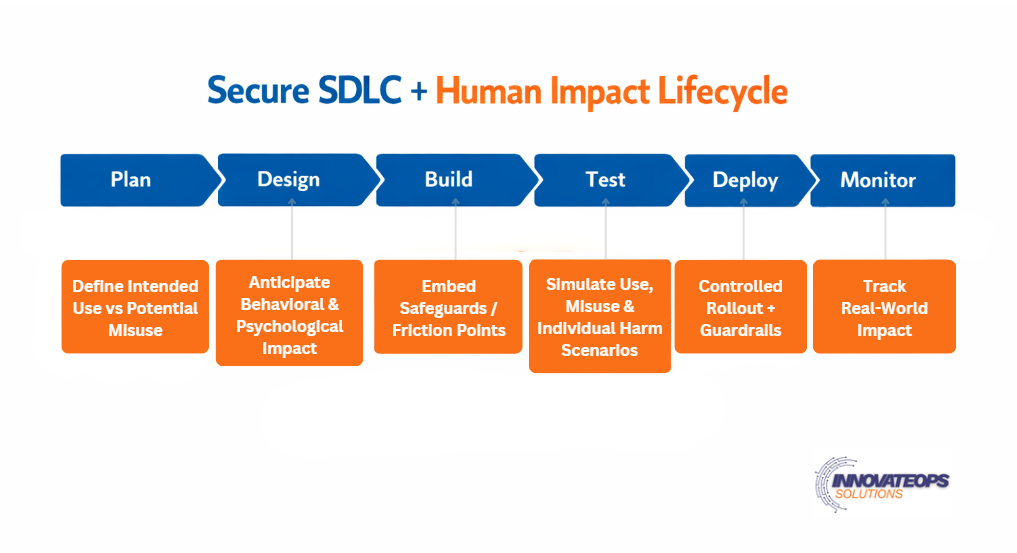

This is what it looks like when human impact is built into how products are developed—not added after the fact.

The same lifecycle teams already use, expanded to account for what happens when systems meet real people at real scale.

Because that’s where things start to break.

After everything looks correct, and no one is looking anymore.

How to Read This Model

The top layer is the Secure SDLC—unchanged

The bottom layer introduces human impact considerations at each phase

Each stage expands from technical risk → real-world human outcomes

This is not a new process—it’s an extension of existing workflows

This isn’t about introducing a new process.

It’s about expanding how we think within the one we already use.

The lifecycle stays the same. The expectations within each phase evolve.

What changes is what we consider, what we test for,

and what we measure once systems are in the real world.

What Actually Changes

Planning now considers misuse, not just intended use

Design includes behavioral and psychological impact

Testing evaluates harm scenarios, not just system defects

Deployment introduces guardrails, not just release readiness

Monitoring tracks real-world impact, not just performance
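As a rough illustration, the five changes above can be written down as data, so a team can see at a glance which phases have human-impact work recorded and which do not. This is a minimal Python sketch, not part of the model itself. The `Phase` class, the `evidence` field, and the `missing_human_impact` helper are hypothetical names introduced here. The pairings come from the list above; the list gives no existing focus for Design, so that value is left empty.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Phase:
    name: str
    existing_focus: Optional[str]   # what the phase already covers today
    expanded_focus: str             # what the phase now also considers
    # Hypothetical field: notes or links showing the expanded work was done.
    evidence: List[str] = field(default_factory=list)


def build_lifecycle() -> List[Phase]:
    """Same SDLC phases, each with the expanded focus from the list above."""
    return [
        Phase("Planning", "intended use", "misuse"),
        Phase("Design", None, "behavioral and psychological impact"),
        Phase("Testing", "system defects", "harm scenarios"),
        Phase("Deployment", "release readiness", "guardrails"),
        Phase("Monitoring", "performance", "real-world impact"),
    ]


def missing_human_impact(phases: List[Phase]) -> List[str]:
    """Return the names of phases with no recorded human-impact evidence."""
    return [p.name for p in phases if not p.evidence]


lifecycle = build_lifecycle()
lifecycle[0].evidence.append("misuse cases reviewed during planning")
print(missing_human_impact(lifecycle))
# ['Design', 'Testing', 'Deployment', 'Monitoring']
```

A check like this could sit in an existing release checklist. What counts as sufficient evidence is a decision for the team, not something the code can settle.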

What Doesn’t Change

Same SDLC phases

Same teams and workflows

Same delivery expectations

What Expands

The risks we anticipate

The scenarios we simulate

The responsibility we carry after launch
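To make “the scenarios we simulate” concrete, here is a small sketch of a harm-scenario test in Python. Everything specific in it is an assumption for illustration: the notification feature, the quiet-hours window, the daily cap, and the function names `should_send` and `simulate_day` are hypothetical and not drawn from any particular product or framework. The scenario asks what one user would experience if an engagement loop tried to notify them every 30 minutes for a full day.

```python
from datetime import datetime, timedelta
from typing import List

# Hypothetical guardrail values; real limits would be a product decision.
QUIET_START_HOUR = 22   # 10 pm
QUIET_END_HOUR = 7      # 7 am
MAX_PER_DAY = 5


def should_send(now: datetime, sent_today: int) -> bool:
    """Guardrail: block sends during quiet hours or past the daily cap."""
    in_quiet_hours = now.hour >= QUIET_START_HOUR or now.hour < QUIET_END_HOUR
    return not in_quiet_hours and sent_today < MAX_PER_DAY


def simulate_day(attempt_times: List[datetime]) -> List[datetime]:
    """Replay a day's send attempts and return the ones the guardrail allows."""
    delivered: List[datetime] = []
    for t in attempt_times:
        if should_send(t, sent_today=len(delivered)):
            delivered.append(t)
    return delivered


# Harm scenario: the system attempts a notification every 30 minutes, all day.
start = datetime(2024, 1, 1, 0, 0)
attempts = [start + timedelta(minutes=30 * i) for i in range(48)]
delivered = simulate_day(attempts)

# The test is about what the person receives, not whether the code crashes.
assert len(delivered) <= MAX_PER_DAY
assert all(QUIET_END_HOUR <= t.hour < QUIET_START_HOUR for t in delivered)
```

The system “works” whether or not these assertions exist. The difference is that the harm scenario now fails a build instead of surfacing after launch.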

If your lifecycle ends at deployment, you’re not measuring impact.

You’re guessing.
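One way to picture the difference is a monitoring setup that tracks human-impact signals next to performance metrics. The Python sketch below is only an illustration under stated assumptions: the `Signal` class, the two helper functions, the signal names, and every number are made up for the example and are not measurements from any real system.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Signal:
    name: str
    value: float       # latest observed value
    threshold: float   # level at which a person should review it
    kind: str          # "performance" or "human_impact"


def has_impact_coverage(signals: List[Signal]) -> bool:
    """True if at least one human-impact signal is being tracked at all."""
    return any(s.kind == "human_impact" for s in signals)


def needs_review(signals: List[Signal]) -> List[str]:
    """Names of human-impact signals at or above their review threshold."""
    return [s.name for s in signals
            if s.kind == "human_impact" and s.value >= s.threshold]


# Made-up values, for illustration only.
signals = [
    Signal("p95_latency_ms", 180.0, 500.0, "performance"),
    Signal("harm_reports_per_10k_users", 4.2, 3.0, "human_impact"),
    Signal("late_night_session_share", 0.12, 0.25, "human_impact"),
]

print(has_impact_coverage(signals))        # True
print(needs_review(signals))               # ['harm_reports_per_10k_users']
print(has_impact_coverage(signals[:1]))    # False: performance-only monitoring
```

A dashboard made up only of entries like the first one can look healthy while saying nothing about what the product is doing to the people using it.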

What changes here isn’t theoretical. It’s operational.

It shows up in what you plan for.

What you test for.

And what you take responsibility for after launch.

And once you see it, you can’t unsee it.